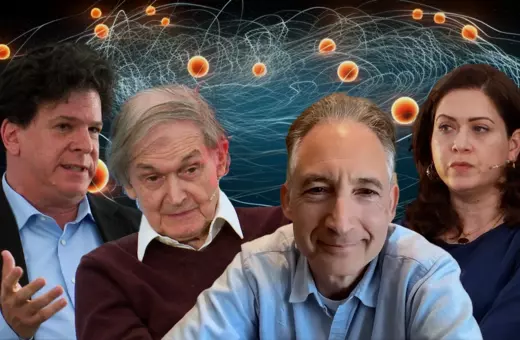

An ancient idea is still alive in the most advanced theory of physics today: that matter consists of a set of ultimate particles. But the obsession with going beyond the Standard Model and finding more and more particles to solve the problems of physics has fallen victim to a theoretical and experimental framework that has run its course. Sabine Hossenfelder, Gavin Salam, and Bjørn Ekeberg debate the future of particle physics at the HowTheLightGetsIn festival.

The question of what the material world around us is made of, and whether that stuff - matter - is infinitely divisible or whether at some point we reach the pixels of existence, started out as a philosophical one. Democritus, born around 460 BC, thought he could answer it using reason alone. Matter couldn’t possibly be infinitely divisible, theorised Democritus. It therefore consisted of “atoms”, from the Greek word for indivisible – the ultimate units of matter that could not be broken down any further. This ancient idea is still alive in the most advanced theory of physics today, the Standard Model. Particle physics may have given the name “atom” away prematurely, as we later discovered that what we today call atoms do have constituent parts, other particles - electrons, protons, and neutrons - and that protons and neutrons are in turn made of other, more fundamental particles. In total, the Standard Model inventory contains 17 particles.

The contemporary search for the fundamental building blocks of the universe has been driven by a reductionist philosophy: get to the bottom of what everything is made of, and we will finally understand the mysteries of the cosmos. That is true to some extent: the Standard Model is one of the most successful scientific theories ever devised, giving us an extraordinary ability to manipulate matter. Its predictions have probably been tested experimentally more than those of any other theory, and despite talk of cracks in the theory, for the most part it has proved incredibly robust. And yet particle physicists want to dig deeper, build bigger accelerators, and search for particles that could help solve problems like dark matter and dark energy. But not everyone thinks that’s a good idea.

___

The term “particle” is part of a theoretical and an experimental framework, and the meaning of “electron”, “photon”, “fermion”, “quark” etc. only makes sense with reference to those two frameworks.

___

During the debate “Particles, Physics and Fairy Tales” at London’s HowTheLightGetsIn festival, theoretical physicist and YouTuber extraordinaire Sabine Hossenfelder, distinguished particle physicist Gavin Salam, and radical philosopher of science Bjørn Ekeberg grappled with the question of whether particle physics has run its course.

The starting point of any discussion about particle physics today should be an account of what we’re even talking about when we’re talking about particles. Particle physicists may have a pretty good idea of what particles are, “they at least have that going for them”, Sabine Hossenfelder joked on stage, but explaining to a non-physicist what particles are is far from straightforward. Physics is well past Democritus’ version of atoms as irreducible bits of matter. The term “particle” is part of a theoretical and an experimental framework, and the meaning of “electron”, “photon”, “fermion”, “quark” etc. only makes sense with reference to those two frameworks. When asked what a particle is, Sabine Hossenfelder answered “an irreducible representation of the Poincaré group”. Since abstract algebra, and group theory in particular, is the language of the Standard Model, the meaning of its key theoretical terms is best defined through mathematics, which famously doesn’t translate into natural language all that easily.
