‘Longtermism’ and ‘Effective Altruism’ have become buzzwords within certain circles. While concern for future people sounds morally intuitive, nothing concrete is being offered by those who claim we should care for the distant future, writes Ben Chugg.

In the 1970s, the philosopher Peter Singer catalyzed a moral revolution by arguing that we should do more to help the global poor. His essay “Famine, Affluence, and Morality” argued that distance should not affect our moral decision-making. A malaria-stricken child is equally deserving of our attention whether they live next door or 20,000 kilometers away.

Singer’s arguments made, and continue to make, a huge impact. He founded The Life You Can Save, which has directed thousands of people to make more globally conscious choices with their donations. He influenced the Giving Pledge, which commits billionaires to giving away a majority of their wealth. He is widely acknowledged as the father of the animal welfare movement and often cited as the most influential living philosopher. It was no surprise when, in 2021, he was awarded the Berggruen Prize for his work on practical ethics.

Perhaps most significantly, Singer inspired the Effective Altruism (EA) movement, which describes itself as “using evidence and reason to figure out how to benefit others as much as possible, and taking action on that basis.” Since its inception in the early 2010s, EA has been responsible for millions of dollars of funding towards causes such as preventing blindness from trachoma, curing obstetric fistula, providing anti-malarial bednets, and sending direct cash transfers to the world’s most impoverished. EA has campaigned for better conditions for animals in factory farms and inspired some of the world’s wealthiest individuals to donate more of their wealth.

In the last few years, however, EA has slowly moved in another direction: one that is less moved by Singer’s case for helping the global poor. Indeed, we might conceive of Singer’s influence as the first wave of EA. The second wave is focused on longtermism, the idea that “positively influencing the far-future is a key moral priority of our time.”

___

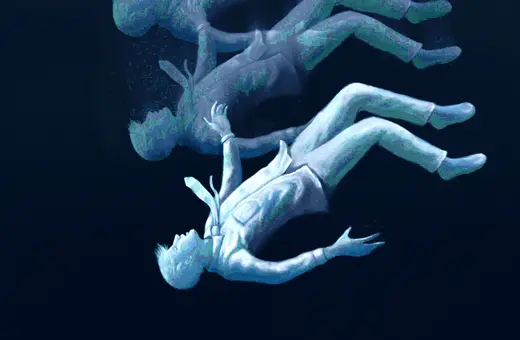

Imagine someone from the year 1000 trying to influence the present. Our circumstances would be beyond imagination, our problems unrecognizable, our desires mysterious.

___

Longtermism argues that, just as we shouldn’t care about where a person lives, we also shouldn’t care about when a person lives. Adherents of longtermism (longtermists) go on to argue that, given this temporal agnosticism, we should be far more concerned with the future than with the present, simply because of the sheer number of people who may yet be born. If humans survive even as long as a typical mammalian species, there could be trillions upon trillions of future humans. Since there may be vastly more people alive in the future than there are today, the weight of our moral obligations, on this view, lies largely in the future.

Perhaps the leading spokesperson for longtermism is William MacAskill, a young, energetic professor of philosophy at Oxford who was one of EA’s founding members. MacAskill makes the case for longtermism in his book, What We Owe the Future. Like Singer, he is trying to shake the moral ground beneath our feet.
