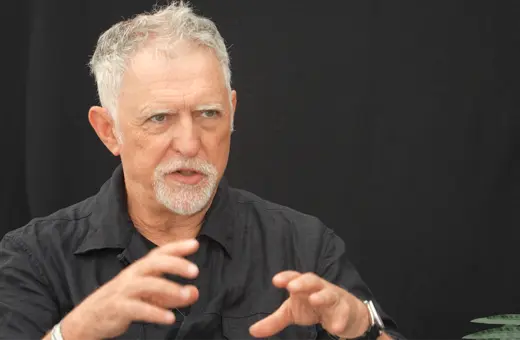

Our default intuition when it comes to consciousness is that humans and some other animals have it, whereas plants and trees don’t. But how sure can we be that plants aren’t conscious? And what if what we take to be behavior indicating consciousness can be replicated with no conscious agent involved? Annaka Harris invites us to consider the real possibility that our intuitions about consciousness might be mere illusions.

Our intuitions have been shaped by natural selection to quickly provide life-saving information, and these evolved intuitions can still serve us in modern life. For example, in threatening situations we can unconsciously perceive elements of our environment, and this perception delivers an almost instantaneous assessment of danger, such as the intuition that we shouldn’t get into an elevator with someone, even though we can’t put our finger on why.

But our guts can deceive us as well, and “false intuitions” can arise in any number of ways, especially in domains of understanding — like science and philosophy — that evolution could never have foreseen. An intuition is simply the powerful sense that something is true without having an awareness or understanding of the reasons behind this feeling — it may or may not represent something true about the world.

___

It’s possible for a vivid experience of consciousness to exist undetected from the outside

___

And when we inspect our intuitions about consciousness itself — how we judge whether or not an organism is conscious — we discover that what once seemed like obvious truths are not so straightforward. I like to begin this exploration with two questions that at first glance appear deceptively simple to answer. Note the responses that first occur to you, and keep them in mind as we explore some typical intuitions and illusions.

1) In a system that we know has conscious experiences — the human brain — what evidence of consciousness can we detect from the outside?

2) Is consciousness essential to our behavior?

These two questions overlap in important ways, but it’s informative to address them separately. Consider first that it’s possible for conscious experience to exist without any outward expression at all (at least in a brain). A striking example of this is the neurological condition called locked-in syndrome, in which virtually the entire body is paralyzed but consciousness is fully intact. This condition was made famous by the late editor-in-chief of French Elle, Jean-Dominique Bauby, who ingeniously devised a way to write about his personal story of being “locked in.” After a stroke left him paralyzed, Bauby retained only the ability to blink his left eye. Amazingly, his caretakers noticed his efforts to communicate using this sole remnant of mobility, and over time they developed a method whereby he could spell out words through a pattern of blinks, thus revealing the full scope of his conscious life. He describes this harrowing experience in his 1997 memoir The Diving Bell and the Butterfly, which he wrote in about two hundred thousand blinks.
