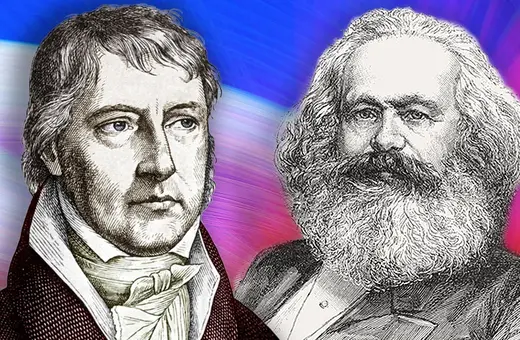

We are infatuated with data and quantitative methods, preferring decision by calculation over human wisdom, even when the data is unreliable and a product of our models. Twenty-five years after the first edition of ‘Trust in Numbers’, his foundational work on the rise of ‘data-driven decision making’ in public life, Theodore M. Porter picks up the case.

Americans, at least, are caught up now in an epidemic of politicized deceit, extending to untruths so glaring that any effort at reasoned refutation seems pointless. The poster child for this mode of talk is (at least for the moment) our president. How can an educated populace care so little for what to most decently educated people appears as settled knowledge?

Ordinary citizens are likely to receive basic instruction on the pursuit of knowledge in pre-college science classes, typically as rules of "scientific method." Philosophers and social scientists who write on science are mostly skeptical of such method talk. At best, it says something about testing, bypassing entirely the fundamental problem of framing a hypothesis that is worth testing.

Beyond that, really serious problems like trying to understand and control an epidemic involve issues that go far beyond testing hypotheses. Scientists at work are more likely these days to speak of modeling than of hypothesis testing. Modeling has to do with establishing the structure of the problem at issue. In much science that really matters, even the data are enveloped in doubt. It is still possible for researchers to make persuasive arguments about complex issues, but much of what seems simplest may well be misleading. Numerical data, often described nowadays as the bedrock of science, may be all the more deceptive just because it seems basic and unfiltered. In reality, the relationship of data and models goes in both directions. That is, data often is nothing like bedrock. In complex science, the model is often required to fix values for the data.

"Scientific method" has a formulaic character that makes science sound simple, and may encourage the idea that legitimate science can be recognized by anyone. The celebration of data, so typical of our own time, is also a bit like this, a simplifying move. Even competent scientists sometimes endorse it unthinkingly. Getting good numbers, especially for complex problems, is anything but straightforward.

An example like Covid-19 reveals how abundant and mundane the obstacles to reliable data can be. They may have more to do with bureaucratic irregularities than scientific mysteries. The rules for registering sickness and death, for example, vary at every level, from the town or county to the nation. The recording of deaths may be delayed on weekends or when the victims of disease are away from home, and a cause of death may remain undetermined if the victim was never taken to a hospital. Diagnosis of the sick, of course, depends on testing regimes and on the accessibility of medical personnel. Even Trump, a canny but unskilled statistical meddler, came to realize that he could reduce the measured incidence of Covid simply by testing less. He made no effort to conceal his motives but seemed rather proud of his little discovery, and he encouraged his supporters to back his efforts to limit information. What would remain unknown, the identities of many human disease carriers, might well occasion an increase in sickness and death by promoting the spread of the disease. But first it would create a momentary dip in the disease curve, and that, for him, was reason enough.
