We’re often seduced by the idea that the mind is a computer, and that consciousness is just a matter of running the right code. But philosopher Peter Godfrey-Smith, renowned for his work on octopus minds, disagrees. Fresh research into animal minds—from bees to jellyfish—suggests that consciousness arises not from software but from electrical oscillations moving rhythmically across cell membranes in living brains. And those oscillations, Godfrey-Smith argues, are unlikely to be reproducible in artificial hardware. Perhaps, then, only living brains can truly be conscious.

Late in the previous century, there seemed to be good reasons to think that the physical make-up of a system could not matter much to whether that system had a mind. The organization of the system is what matters, people thought, and physically different systems can be organized the same way. As a result, artificial minds making use of ordinary computer hardware should be possible. This whole discussion was hypothetical, because there were not yet any convincing candidates for artificial minds to worry about.

Since then, two things have happened. First, from around 2022, we’ve been confronted with candidates for artificial minds that are disturbingly impressive: the LLM systems, such as ChatGPT. Second, reasons have emerged to doubt that the physical make-up of a system is irrelevant, and so to doubt that minds are “substrate independent.” A view sometimes called biological naturalism holds that the biological details of nervous systems might make a difference to whether a physical system has a mind. (The term was coined, with this sense at least, by John Searle.) But if nervous systems and brains are special, what is it that makes them special?

This question can be asked for different aspects of mentality. Perhaps an artificial system using computer hardware can be genuinely intelligent but without feeling anything. Perhaps biology matters to feeling but not to intelligence. Alternatively, one might think that something like a living brain is needed for genuine intelligence (or intelligence beyond a very low level), and much of the apparent intelligence in today’s AIs is illusory.

Here I will look mostly at the first option, the idea that the make-up of nervous systems is important to felt experience and less important to intelligence and cognition. These two sides can’t be completely separate, but perhaps the difference that a nervous system makes to the cognitive side of the mind is rather fine-grained, whereas it makes a big difference to the possibility of felt experience or consciousness.

Biological naturalism is not the idea that two systems that do exactly the same things, internally and externally, but have different physical make-ups, can differ in whether they are conscious. The claim is that what a system does, its organization and processing, is dependent on its physical make-up in ways that matter to having a mind. A brain has different activities going on inside it than a computer does, however the computer is programmed. The rival view is that the differences between a living system and a computer are real, but don’t matter.

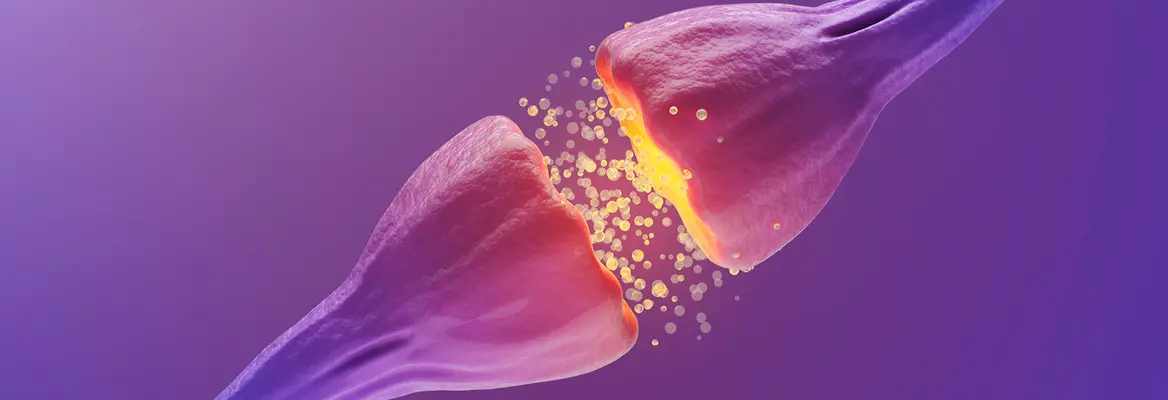

A brain is made up of living cells, bounded by membranes, consuming glucose, interacting through tiny releases of chemicals, fed from a blood supply, and so on. Which of the details of how the brain does things might be important to consciousness? I’ll look at one view in this area, a hypothesis I see as likely to contain at least some truth.

Join the conversation