ChatGPT may be a "bullshit generator," but that makes it uniquely useful for handling mundane, meaningless tasks, offering relief to those burdened by such work, writes Alberto Romero.

ChatGPT is a bullshit generator. You’ve heard that before, haven’t you? Well, unlike probably every other time someone’s said it, I don’t mean it as a put-down but as a compliment. “Bullshit generator” suits ChatGPT because its output has no “regard for truth.” ChatGPT doesn’t lie, because lying is an intentional attempt to conceal the truth. It generates text that happens to be either right or wrong, but only incidentally. Given the appropriate prompt, it will oblige with whatever you need it to say. I used to think that was bad.

Why would we want such a shapeless, opinion-less tool, you may ask? A tool that can’t differentiate between real things and the vast array of imaginary alternatives. A tool that can learn anything, possible and impossible, and will inadvertently and spontaneously confabulate facts about the world. Well, I no longer think that’s bad. It turns out this handy property (automated bullshitting) is singularly useful nowadays. ChatGPT may not be the end of meaning, as I’ve often wondered, but quite the opposite: the end of meaninglessness. Let’s see why.

___

It’s hard to say objectively which work has value and which doesn’t, but it’s easy for each of us to answer this question: Do you spend time on tasks you don’t like, that you believe don’t really need to be done?

___

ChatGPT as a means to recapture sanity

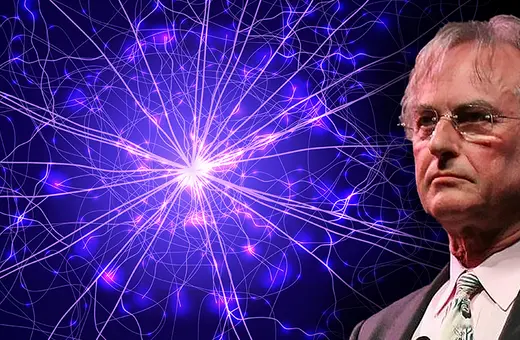

Douglas Hofstadter has made mixed comments about ChatGPT and GPT-4 recently. Two weeks ago he publicly admitted to being terrified that AI will eventually eclipse us at everything (a fear that apparently goes back a decade). Yet a few days ago he wrote:

“I frankly am baffled by the allure, for so many unquestionably insightful people (including many friends of mine), of letting opaque computational systems perform intellectual tasks for them. Of course it makes sense to let a computer do obviously mechanical tasks, such as computations, but when it comes to using language in a sensitive manner and talking about real-life situations where the distinction between truth and falsity and between genuineness and fakeness is absolutely crucial, to me it makes no sense whatsoever to let the artificial voice of a chatbot, chatting randomly away at dazzling speed, replace the far slower but authentic and reflective voice of a thinking, living human being.”

Trying to reconcile his statements, I posted the excerpt above on Twitter. Interestingly, no one pointed out the conflict between Hofstadter’s views. Instead, some suggested he may be too disconnected from the struggles of ordinary people. The most liked response was this:

“The problem is that most of us don't get to live in a purely thoughtful, intellectual environment. Most of us have to live with jobs where we’re required to write corporate nonsense in response to corporate nonsense. Automating this process is an attempt to recapture some sanity.”

I kinda agreed with this more quotidian, relatable perspective (who wouldn’t use ChatGPT to lift the burden of empty, pointless work?), yet Hofstadter’s comment also felt right to me. But after a day of thinking, the apparent contradiction disappeared:

Like Hofstadter, I live in a somewhat “purely thoughtful, intellectual environment,” removed from the emptiness of “corporate nonsense.” My professional career has been an incessant effort to avoid being absorbed into it. That’s why I never really saw the need to use ChatGPT. That’s why I couldn’t understand just how useful, even life-saving, it is for so many people.
